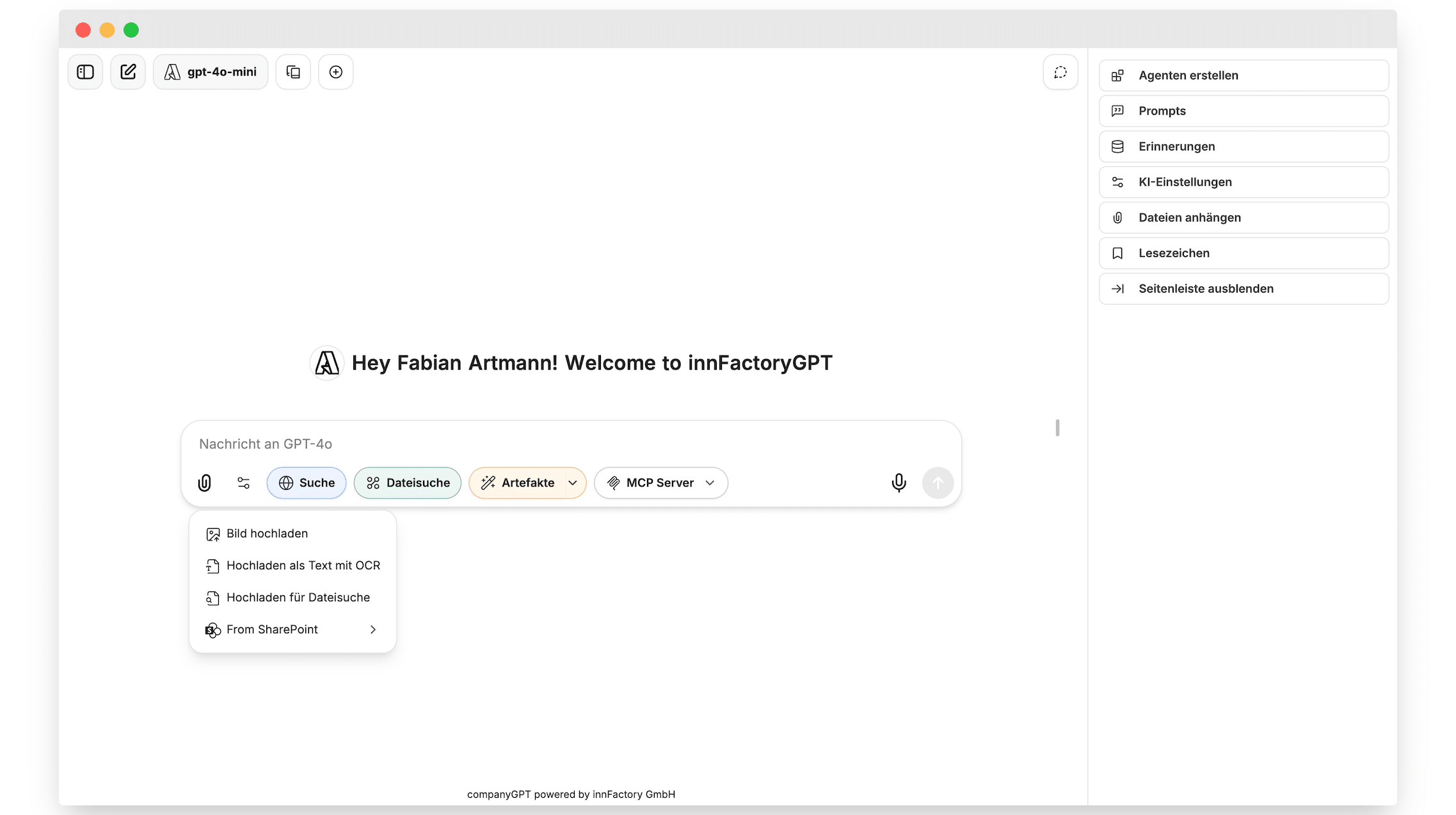

CompanyGPT Cloud Stack: Enterprise AI in Your Own Azure Tenant

Why most private AI platforms fail before they reach production

Most companies that try to build a private AI platform do not get stuck on the model. They get stuck on operations. Authentication, permissions, private connectivity, compliance, updates: this is where a proof of concept turns into a never-ending project. With CompanyGPT, which we deliver together with innFactory AI Consulting, we have already paved that road cleanly. This post walks through the cloud stack behind it.

The architecture at a glance

CompanyGPT runs entirely inside the customer’s Azure tenant. We do not deliver SaaS. We deliver a reproducible platform that gets deployed into your subscription via infrastructure as code. The result: you keep data sovereignty while we handle setup, updates, and hardening.

Core components:

- Azure Kubernetes Service (AKS) as the runtime for all containers

- Azure Database for PostgreSQL as the relational database

- Azure Cosmos DB (MongoDB API) for document-oriented workloads

- Private endpoints for every database and storage connection

- Azure AI Foundry for OpenAI models in the customer’s region

- Google Vertex AI (now Google Agents Platform) for Anthropic Claude and Gemini

- AWS Bedrock and STACKIT Model Serving as optional multi-cloud paths

- Microsoft Entra ID for SSO and token propagation to MCP servers

Azure Kubernetes Service as the central runtime

We deliberately use AKS instead of Container Apps or classic VMs. The reason is the mix of workloads: frontend, API, workers, vector indexer, RAG connectors, MCP servers, and n8n run side by side with very different scaling profiles. Kubernetes provides standardization through Helm charts, clean networking through network policies, and a unified operator layer for logging and monitoring.

The AKS cluster runs with Azure CNI Overlay, is privately reachable only, and uses Workload Identity. Pods access Azure resources without secrets, exclusively via federated credentials. This removes an entire class of leaks.
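To make the "no secrets" point concrete, here is a minimal sketch of the token exchange that Workload Identity performs on a pod's behalf. It assumes the environment variables that the Azure Workload Identity webhook injects (`AZURE_CLIENT_ID`, `AZURE_TENANT_ID`, `AZURE_FEDERATED_TOKEN_FILE`, `AZURE_AUTHORITY_HOST`). The function names and the Key Vault scope are illustrative, not taken from the CompanyGPT code base, and real workloads would normally let an Azure SDK credential class do this. The sketch only builds the request and sends nothing.

```python
import os
from pathlib import Path
from typing import Mapping, Tuple
from urllib.parse import urlencode

ASSERTION_TYPE = "urn:ietf:params:oauth:client-assertion-type:jwt-bearer"
DEFAULT_AUTHORITY = "https://login.microsoftonline.com"


def build_token_request(
    tenant_id: str,
    client_id: str,
    assertion: str,
    scope: str,
    authority: str = DEFAULT_AUTHORITY,
) -> Tuple[str, bytes]:
    """Return (url, form_body) for a client-credentials request that
    authenticates with a federated token instead of a client secret."""
    url = f"{authority.rstrip('/')}/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "scope": scope,
        "client_assertion_type": ASSERTION_TYPE,
        # The Kubernetes service account token takes the place of a secret.
        "client_assertion": assertion,
    }
    return url, urlencode(form).encode("ascii")


def request_from_pod_env(scope: str, env: Mapping[str, str] = os.environ) -> Tuple[str, bytes]:
    """Hypothetical helper: read the values the webhook injected into the pod."""
    token_file = Path(env["AZURE_FEDERATED_TOKEN_FILE"])
    assertion = token_file.read_text(encoding="utf-8").strip()
    return build_token_request(
        env["AZURE_TENANT_ID"],
        env["AZURE_CLIENT_ID"],
        assertion,
        scope,
        env.get("AZURE_AUTHORITY_HOST", DEFAULT_AUTHORITY),
    )
```

Inside a pod, `request_from_pod_env("https://vault.azure.net/.default")` would produce the request for a Key Vault token. The projected service account token is short-lived and rotated by Kubernetes, so there is no long-lived credential to leak.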

Databases behind private endpoints

PostgreSQL holds structured data: users, conversations, audit logs, agent configurations. Cosmos DB with the MongoDB API stores document-oriented content such as memory entries, RAG metadata, and the unstructured data that accompanies the vector indexes. Both databases are reachable exclusively through private endpoints. There is no public DNS record and no public access path.

In practice this means:

- Databases are not visible on the internet, not even to management tooling

- Connections run through private DNS zones inside the customer VNet

- Backup, failover, and geo-redundancy stay available through native Azure features

- Compliance audits see exactly one network boundary, not dozens
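A quick way to verify the points above from inside the VNet is to check that a database hostname resolves only to private addresses. The following is a minimal sketch using the Python standard library. The function names are illustrative, and any hostname you pass in is your own assumption about the deployment.

```python
import ipaddress
import socket
from typing import Iterable, List


def resolve(hostname: str) -> List[str]:
    """Return the distinct IP addresses the local resolver reports for a hostname."""
    infos = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def all_private(addresses: Iterable[str]) -> bool:
    """True only if at least one address is given and every address is private."""
    parsed = [ipaddress.ip_address(address) for address in addresses]
    return bool(parsed) and all(ip.is_private for ip in parsed)
```

Run from a pod or jump host inside the VNet, `all_private(resolve(hostname))` should be `True` for every database endpoint when the private DNS zones are wired up correctly. An empty result counts as a failure on purpose, so a broken resolver does not pass the check silently.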

For customers with elevated sovereignty requirements we add Customer Managed Keys backed by Azure Key Vault. Models and vector indexes can additionally move to STACKIT if a German sovereign cloud is required.

Terraform and GitHub Actions: everything traceable

The entire infrastructure is expressed as Terraform modules. Every subscription, VNet, AKS node pool, private DNS zone, and role gets provisioned as code. Application releases use versioned Helm charts.

GitHub Actions orchestrates everything. Pull requests trigger plan runs against the target environment, reviews enforce the four-eyes principle, and apply runs only after approval. The benefits:

- Every platform change is traceable in the git history

- Rollbacks work through revert, not through manual clicking sessions

- Security patches for base images or Helm charts ship through automated pipelines

- Renovate updates dependencies continuously, so no drift stays hidden

This keeps the platform current and shortens the window in which known vulnerabilities stay unpatched. We rely on modern DevSecOps mechanisms: SBOM generation, container scanning with Trivy, policy checks via OPA, and signed artifacts via Sigstore.
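As an illustration of what a policy check on a plan run can look like, here is a minimal Python sketch that inspects the JSON form of a Terraform plan and flags resources that would be created or updated with public network access enabled. It is a stand-in for the idea, not an OPA/Rego policy and not the pipeline described here. The attribute name `public_network_access_enabled` is an assumption that should be checked against the provider version in use.

```python
from typing import Any, Dict, List

# Assumed attribute name; verify it for each resource type and provider version.
PUBLIC_FLAG = "public_network_access_enabled"


def find_public_resources(plan: Dict[str, Any]) -> List[str]:
    """Return addresses of resources the plan would create or update
    with public network access switched on."""
    offenders = []
    for resource in plan.get("resource_changes", []):
        change = resource.get("change", {})
        actions = set(change.get("actions", []))
        if not actions & {"create", "update"}:
            continue  # deletes and no-ops cannot open a new public path
        after = change.get("after") or {}  # "after" is null for deletions
        if after.get(PUBLIC_FLAG) is True:
            offenders.append(resource["address"])
    return offenders
```

In a pull request job, the input could come from `terraform show -json` on a saved plan file, loaded with `json.load`. The job then fails when the returned list is not empty, which blocks the change before it reaches the apply step.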

TD SYNNEX: Microsoft sourcing without vendor management

As an indirect Microsoft reseller via TD SYNNEX we handle the commercial side as well. Customers do not negotiate their own Microsoft CSP contract, do not extend Enterprise Agreements, and do not process separate invoices. We deliver subscription, consulting, implementation, and optional managed services from a single source. See our post on billing GitHub through Azure for context.

Multi-cloud at the model layer

Model providers are no longer a monopoly. We built CompanyGPT so that the model layer stays interchangeable:

- OpenAI models like GPT-4o and GPT-5 run via Azure AI Foundry inside the tenant

- Anthropic Claude and Google Gemini ship via the Google Agents Platform, formerly Vertex AI

- AWS Bedrock adds Llama, Mistral, and additional models

- STACKIT Model Serving provides Llama, Gemma, and GPT-OSS on a German sovereign cloud

The result is the best of all worlds: latency and data residency from Azure, model variety from GCP, sovereignty from STACKIT, specialty models from AWS. The user picks per request, not the platform operator per quarter.
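An interchangeable model layer can be sketched as a small routing table that maps the model a user requests to the provider serving it. The aliases, provider labels, and deployment names below are hypothetical placeholders, and the optional allow-list is one assumed way to restrict a tenant to sovereign providers. None of it is taken from the CompanyGPT code base.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass(frozen=True)
class ModelRoute:
    provider: str    # which cloud serves the model
    deployment: str  # provider-specific deployment or model identifier


# Hypothetical routing table; real deployments would load this from configuration.
ROUTES: Dict[str, ModelRoute] = {
    "gpt-4o": ModelRoute("azure-ai-foundry", "gpt-4o"),
    "claude": ModelRoute("google", "claude"),
    "gemini": ModelRoute("google", "gemini"),
    "mistral": ModelRoute("aws-bedrock", "mistral"),
    "gemma": ModelRoute("stackit", "gemma"),
}


def route(model: str, allowed_providers: Optional[Set[str]] = None) -> ModelRoute:
    """Resolve a per-request model choice to a provider route.

    Raises KeyError for unknown models and PermissionError when the
    tenant's allow-list excludes the provider that serves the model.
    """
    selected = ROUTES[model]
    if allowed_providers is not None and selected.provider not in allowed_providers:
        raise PermissionError(f"{selected.provider} is not enabled for this tenant")
    return selected
```

With this shape, adding or swapping a provider is a configuration change in one table. The request path and the user-facing model picker stay the same.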

Custom MCP servers for real Microsoft 365 integration

This is where it gets interesting. Open source delivers the foundation of CompanyGPT, but enterprise requirements get solved through our own extensions. At the center are our MCP servers, which implement the Model Context Protocol and reach deep into Microsoft 365.

The most important example is the RAG service for SharePoint. When a user asks a question in CompanyGPT, we propagate the Entra token down to the MCP server. The MCP server then accesses SharePoint with the user’s own token. The implications:

- The AI sees only documents the user is already allowed to see

- SharePoint permissions stay fully intact

- There is no service account collecting broad read permissions

- Microsoft 365 audit logs show the real user, not a technical bot
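One common way to carry a user's identity from an API to a downstream Microsoft service is the OAuth 2.0 On-Behalf-Of flow of the Microsoft identity platform. The post does not say which mechanism the MCP servers use, so treat the following as a sketch of that flow under this assumption. The function names are illustrative, and the sketch only builds the token request without sending it.

```python
from typing import Dict

OBO_GRANT_TYPE = "urn:ietf:params:oauth:grant-type:jwt-bearer"


def token_endpoint(tenant_id: str) -> str:
    """Token endpoint of the Microsoft identity platform for one tenant."""
    return f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"


def build_obo_form(
    user_token: str,
    client_id: str,
    client_secret: str,
    scope: str = "https://graph.microsoft.com/.default",
) -> Dict[str, str]:
    """Form fields that exchange the user's incoming token for a
    Microsoft Graph token issued on behalf of the same user."""
    if not user_token:
        raise ValueError("an incoming user token is required")
    return {
        "grant_type": OBO_GRANT_TYPE,
        "client_id": client_id,
        # A certificate-based client assertion can replace the secret.
        "client_secret": client_secret,
        "assertion": user_token,
        "scope": scope,
        "requested_token_use": "on_behalf_of",
    }
```

The token that comes back is a delegated token. It carries the user's identity, so the downstream call is evaluated against that user's permissions and appears under that user in the audit log.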

You will not find this kind of integration in the open source world. It only emerges when you think about identity, tokens, MCP, and Microsoft Graph as one system.

Additional MCP servers cover Outlook, Teams, OneDrive, and customer-specific line-of-business systems. Each server follows the same pattern: Entra token in, permissions checked, contextualized data out.
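The "token in, data out" pattern can be sketched with the Microsoft Graph search API. The request below is built with the user's delegated token, so Graph applies that user's permissions to the results. Which Graph endpoints the MCP servers actually call is not stated here. The choice of the search endpoint, the function name, and the default page size are assumptions for illustration.

```python
import json
from typing import Dict, Tuple

GRAPH_SEARCH_URL = "https://graph.microsoft.com/v1.0/search/query"


def build_search_request(
    delegated_token: str, question: str, size: int = 5
) -> Tuple[str, Dict[str, str], bytes]:
    """Return (url, headers, body) for a Graph search over files,
    executed with the calling user's own delegated token."""
    if not delegated_token:
        # Refuse to fall back to a service identity with broad read access.
        raise PermissionError("no user token supplied")
    headers = {
        "Authorization": f"Bearer {delegated_token}",
        "Content-Type": "application/json",
    }
    payload = {
        "requests": [
            {
                "entityTypes": ["driveItem"],
                "query": {"queryString": question},
                "from": 0,
                "size": size,
            }
        ]
    }
    return GRAPH_SEARCH_URL, headers, json.dumps(payload).encode("utf-8")
```

The important design choice is the refusal path: when no user token arrives, the server returns an error instead of switching to a technical account. That keeps the permission model identical to what the user would see in SharePoint directly.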

What customers get

From a customer perspective the picture is clear:

- A private AI platform in their own tenant without vendor lock-in

- Support for GDPR and EU AI Act compliance through European regions and sovereign options

- No license fees, only infrastructure and token usage

- Continuous updates and hardening through DevSecOps pipelines

- Deep Microsoft 365 integration with correct permission propagation

- Multi-cloud model selection across AI Foundry, Google Agents, Bedrock, and STACKIT

Conclusion

CompanyGPT is not another ChatGPT clone. It is an enterprise platform that brings cloud engineering, identity, compliance, and AI together. The cloud stack with AKS, PostgreSQL, Cosmos DB, private endpoints, Terraform, and GitHub Actions is exactly what we build in other enterprise projects. The AI layer and deep Microsoft 365 integration sit on top.

If you are considering building your own AI platform, talk to us before reinventing the wheel. We know the pitfalls and have already paved the road.

Request a demo or jump straight to our cloud services.

Tobias Jonas
